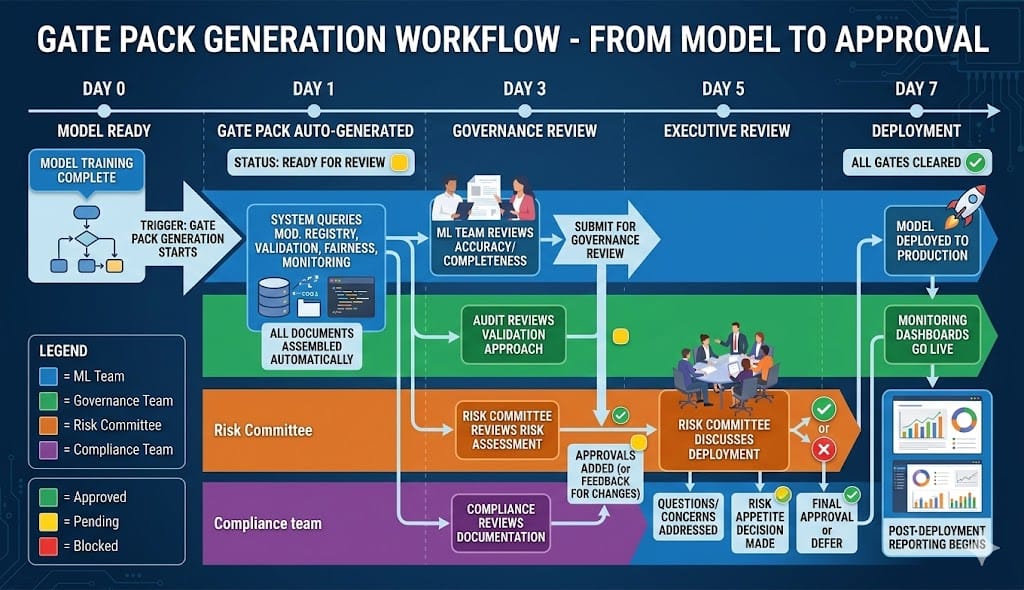

Quick Recap: Your model is trained, validated, fair, and accurate. But it can't ship until compliance approves it. Approval requires 40+ documents in specific formats, and building them manually takes weeks. Here's how to automate gate pack generation: create the evidence templates once, then let scripts populate them from your model registry, validation, and monitoring systems.

The Gate Pack Problem (Why Models Get Stuck)

Your ML team finishes training a credit risk model. They think: "We're done. Let's deploy."

Then someone asks: "Where's the gate pack?"

Gate pack = the evidence portfolio proving the model is safe and fair to deploy.

Required documents:

Model card (what is it, how does it work, limitations)

Validation report (was it tested? Results?)

Fairness audit (is it fair? Disparities measured?)

Backtesting results (would it have worked historically?)

Monitoring plan (how will we catch failures?)

Incident response runbook (what if something breaks?)

Regulatory compliance checklist (does it meet Fed/EBA/FCA requirements?)

Risk assessment (what are the risks?)

Governance approvals (who signed off?)

Training documentation (who built it, how, when?)

Data lineage (where did training data come from?)

Feature documentation (what's in the model?)

Inference architecture diagram (how does it run in production?)

Security assessment (is it secure?)

Explainability documentation (how do we explain decisions?)

Performance dashboards (proof it works)

Audit trail (immutable decision logs)

... and 20+ more

Compliance says: "Show me all of this. In specific format. For the audit committee."

Your team spends 4 weeks copying data into Word documents, hand-formatting tables, manually writing summaries.

By the time it's ready, the model training script needs updating because the data changed.

Banks that solve this use gate pack generators—automated systems that:

Query your model registry (what models exist?)

Query your validation system (what are the validation results?)

Query your monitoring (what are the current fairness metrics?)

Populate documentation templates automatically

Generate a consistent, auditable gate pack in minutes

The Gate Pack Structure (What Regulators Actually Want)

Gate packs have a standard structure that regulators recognize:

Section 1: Model Identity & Documentation

What regulators need: Proof this is a real model with clear ownership and governance.

Proof points:

Model name, version, creation date, last updated date

Model owner (name, role, contact)

Governance approval chain (who approved this model for deployment?)

Business purpose (what problem does this solve?)

Model lineage (previous versions, how did we get here?)

Data dictionary (what features does the model use?)

Template format:

MODEL IDENTITY & GOVERNANCE

============================

Model Name: Credit Risk Scorer v2.4

Model Version: 2.4.1

Created: 2025-02-15

Last Updated: 2025-02-22

Training Data Cutoff: 2025-01-31

Ownership Chain:

Development Owner: Alice Chen, Senior ML Engineer, ML Platform Team

Risk Owner: Bob Singh, Credit Risk Manager, Risk Management

Governance Owner: Carol Martinez, Head of AI Governance

Approval Chain:

✓ Governance approval: Feb 22, 2025 (Carol Martinez)

✓ Risk approval: Feb 20, 2025 (Bob Singh)

[ ] Compliance approval: Pending

[ ] Audit approval: Pending

Business Purpose:

Automate credit risk assessment for consumer lending decisions.

Target: Replace manual scorecard process (current: 2 hours per application).

Expected benefit: $1.2M/year in process efficiency.

Previous Versions:

v2.3 - Deployed Dec 2024 (deprecated - replaced by v2.4)

v2.2 - Deployed Sep 2024 (deprecated - fairness issues)

v2.1 - Validation failed (data quality issues)

This whole section is auto-generated from your model registry.
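As a rough illustration of how a registry record could populate the identity section, here is a minimal sketch using only the standard library. The record layout and field names (`name`, `version`, `approvals`, and so on) are assumptions made for this example, not the schema of MLflow or any other registry.

```python
from string import Template

# Hypothetical registry record; the field names are illustrative assumptions.
registry_record = {
    "name": "Credit Risk Scorer",
    "version": "2.4.1",
    "created": "2025-02-15",
    "last_updated": "2025-02-22",
    "approvals": [
        {"role": "Governance", "approver": "Carol Martinez", "date": "2025-02-22"},
        {"role": "Risk", "approver": "Bob Singh", "date": "2025-02-20"},
        {"role": "Compliance", "approver": None, "date": None},
    ],
}

IDENTITY_TEMPLATE = Template(
    "MODEL IDENTITY & GOVERNANCE\n"
    "Model Name: $name\n"
    "Model Version: $version\n"
    "Created: $created\n"
    "Last Updated: $last_updated\n"
    "Approval Chain:\n"
    "$approval_lines"
)


def format_approval(approval):
    """Return one approval line, marking missing sign-offs as pending."""
    if approval["approver"] is None:
        return f"[ ] {approval['role']} approval: Pending"
    return f"[x] {approval['role']} approval: {approval['date']} ({approval['approver']})"


def render_identity(record):
    """Fill the identity template from a registry record."""
    approval_lines = "\n".join(format_approval(a) for a in record["approvals"])
    return IDENTITY_TEMPLATE.substitute(
        name=record["name"],
        version=record["version"],
        created=record["created"],
        last_updated=record["last_updated"],
        approval_lines=approval_lines,
    )


print(render_identity(registry_record))
```

Deriving the pending markers from the data, rather than typing them by hand, keeps the approval chain from showing a sign-off that has not happened yet.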

Section 2: Validation Results (Proof It Works)

What regulators need: Evidence the model was tested before deployment.

Proof points:

Accuracy metrics (overall and by demographic)

Backtesting results (would it have worked historically?)

Fairness metrics (is it fair?)

Stress testing (how does it perform under extreme conditions?)

Robustness testing (does it handle edge cases?)

Template format:

VALIDATION RESULTS

==================

Accuracy Metrics (Hold-out test set):

Overall Accuracy: 94.2%

Precision: 92.8%

Recall: 93.1%

AUC-ROC: 0.894

Accuracy by Demographic:

Male: 94.5% (approval rate 52%)

Female: 93.9% (approval rate 50%)

Disparity: 0.6% (within threshold ✓)

Backtesting Results (18 months historical data):

Model accuracy on past data: 93.8%

Default prediction accuracy: 89.2%

Prediction calibration error: 0.2% (excellent)

Conclusion: Model is reliable ✓

Fairness Validation:

Demographic Parity: 3.2% disparity (threshold: 5%) ✓

Equalized Odds: 2.1% disparity (threshold: 3%) ✓

Disparate Impact Ratio: 89% (threshold: 80%) ✓

Unexplained Disparity: 1.2% (threshold: 2%) ✓

Stress Testing (Recession Scenario):

Model accuracy in stressed data: 89.1% (vs. 94% baseline)

Fairness in stressed data: 3.8% disparity (stable)

Conclusion: Model degrades gracefully ✓

Sign-off:

Validation completed by: Alice Chen (ML Engineer)

Validation review by: Carol Martinez (Governance)

Date: 2025-02-22

This is auto-generated from your validation system (Weights & Biases, Kubeflow, or custom pipeline).
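The "Accuracy by Demographic" lines in the template boil down to a per-group accuracy and a gap compared against a threshold. A minimal sketch of that calculation follows; the tiny dataset is made up, and treating the disparity as the max-minus-min accuracy gap is an assumption, since your validation policy may define it differently.

```python
def accuracy(pairs):
    """Fraction of (prediction, label) pairs that match."""
    return sum(1 for pred, label in pairs if pred == label) / len(pairs)


def accuracy_by_group(records):
    """Group (group, prediction, label) records and score each group."""
    grouped = {}
    for group, pred, label in records:
        grouped.setdefault(group, []).append((pred, label))
    return {group: accuracy(pairs) for group, pairs in grouped.items()}


def disparity_check(per_group, threshold):
    """Return the max-min accuracy gap and whether it is within threshold."""
    gap = max(per_group.values()) - min(per_group.values())
    return gap, gap <= threshold


# Made-up hold-out records: (group, prediction, label)
records = [
    ("male", 1, 1), ("male", 0, 0), ("male", 1, 0), ("male", 0, 0),
    ("female", 1, 1), ("female", 0, 1), ("female", 0, 0), ("female", 1, 1),
]

per_group = accuracy_by_group(records)
gap, within_threshold = disparity_check(per_group, threshold=0.05)
print(per_group, gap, within_threshold)
```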

Section 3: Fairness & Bias Documentation

What regulators need: Proof the model doesn't discriminate.

Proof points:

Fairness metrics by demographic (approval rate, accuracy, false positive rate)

Root cause analysis (why does disparity exist?)

Mitigation steps (what did you do about it?)

Regulatory compliance (does it meet Fed/EBA/FCA standards?)

Template format:

FAIRNESS & BIAS AUDIT

=====================

Demographic Groups Monitored:

Protected characteristics: Gender, Race, Age

Additional slices: Geographic region, Employment type, Income bracket

Approval Rate Parity:

Female: 50.2%

Male: 50.5%

Disparity: 0.3% (threshold: 5%) ✓ GREEN

Accuracy by Group:

Female: 93.9%

Male: 94.5%

Disparity: 0.6% (threshold: 5%) ✓ GREEN

False Positive Rate (Bad approvals) by Group:

Female: 4.2%

Male: 4.1%

Disparity: 0.1% (threshold: 2%) ✓ GREEN

Root Cause Analysis for Approval Disparity:

Observed disparity: 0.3%

Explained by income: +0.2% (women in this cohort slightly lower income)

Explained by credit score: -0.1%

Unexplained: 0.2% (acceptable threshold: 2%)

Conclusion: Disparity is explained by legitimate risk factors ✓

Regulatory Compliance:

Fed (Disparate Impact Ratio): 89% (threshold: 80%) ✓

EBA (Equalized Odds): 2.1% disparity (threshold: 3%) ✓

FCA (Outcome Parity): 0.3% disparity (threshold: 5%) ✓

Overall Assessment: FAIR ✓

This is auto-generated from your fairness monitoring system (AIF360, Giskard, or custom).
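Two of the numbers in this template, the approval rate gap and the disparate impact ratio, can be computed directly from decisions grouped by demographic. The sketch below assumes binary decisions (1 = approved) and uses the 5% and 80% thresholds from the template as defaults; the function names and the sample data are hypothetical.

```python
def approval_rate(decisions):
    """Share of decisions that are approvals (1 = approved, 0 = declined)."""
    return sum(decisions) / len(decisions)


def fairness_summary(decisions_by_group, parity_threshold=0.05, ratio_threshold=0.80):
    """Compute the approval-rate gap and the disparate impact ratio."""
    rates = {group: approval_rate(d) for group, d in decisions_by_group.items()}
    highest, lowest = max(rates.values()), min(rates.values())
    parity_gap = highest - lowest
    # Ratio of the lowest group approval rate to the highest one.
    impact_ratio = lowest / highest if highest > 0 else 1.0
    return {
        "rates": rates,
        "parity_gap": parity_gap,
        "impact_ratio": impact_ratio,
        "parity_ok": parity_gap <= parity_threshold,
        "ratio_ok": impact_ratio >= ratio_threshold,
    }


# Made-up decisions: 50% of one group approved, 52% of the other.
decisions_by_group = {
    "female": [1] * 50 + [0] * 50,
    "male": [1] * 52 + [0] * 48,
}

summary = fairness_summary(decisions_by_group)
print(summary)
```

Metrics such as equalized odds also need the true outcomes per group, so they would be computed from labelled data rather than from decisions alone.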

Section 4: Monitoring & Incident Response

What regulators need: Proof you'll catch failures quickly and respond.

Proof points:

Monitoring dashboard (how will you track model health?)

Alert thresholds (when do you escalate?)

Incident response runbook (who does what when things break?)

Escalation matrix (who calls who?)

Template format:

MONITORING & INCIDENT RESPONSE

===============================

Production Monitoring Plan:

Metric                  Frequency    Threshold       Alert Level
─────────────────────────────────────────────────────────────────────
Model Accuracy          Daily        Drop >5%        RED → Halt
Approval Rate           Daily        Change >10%     YELLOW → Investigate
Fairness Disparity      Weekly       Increase >3%    RED → Investigate
Prediction Confidence   Daily        Drop >10%       YELLOW → Investigate
Infrastructure Health   Continuous   Any failures    RED → Page on-call

Escalation Matrix:

RED alert → Incident Commander on-call (0-15 min)

YELLOW alert → ML Lead + Risk Manager (within 8 hours)

Both escalate to Risk VP if RED persists >2 hours

Incident Response Runbook:

1. Verify alert is real (2 min)

2. Classify incident (autonomy level, scope, criticality) (2 min)

3. Execute action (halt/pause/investigate) (1 min)

4. Notify stakeholders (2 min)

5. Investigate root cause (30 min)

6. Propose remediation (30 min)

7. Execute fix (1-4 hours depending on severity)

8. Post-mortem (within 24 hours)

Dashboard Location: https://monitoring.internal/credit-risk-v2.4

Contact for alerts: [email protected]

Sign-off:

Runbook approved by: Bob Singh (Risk Manager)

Escalation approved by: Carol Martinez (Governance)

Date: 2025-02-22

This is auto-generated from your monitoring system (Grafana, Prometheus, custom dashboards).
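The monitoring plan above is essentially a rule table, so the same rules that appear in the gate pack can also drive alerting. Here is a minimal sketch of that idea. It assumes the thresholds are percentage-point movements against a stored baseline, which the template does not specify, and the metric keys are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    metric: str
    direction: str  # "drop", "increase", or "change"
    limit: float    # percentage points (an assumption for this sketch)
    level: str
    action: str


# Mirrors the numeric rows of the monitoring plan.
RULES = [
    Rule("model_accuracy", "drop", 5.0, "RED", "Halt"),
    Rule("approval_rate", "change", 10.0, "YELLOW", "Investigate"),
    Rule("fairness_disparity", "increase", 3.0, "RED", "Investigate"),
    Rule("prediction_confidence", "drop", 10.0, "YELLOW", "Investigate"),
]


def movement(rule, baseline, current):
    """Size of the move in the direction the rule cares about."""
    delta = current - baseline
    if rule.direction == "drop":
        return -delta
    if rule.direction == "increase":
        return delta
    return abs(delta)


def evaluate(baselines, currents):
    """Return (metric, level, action) for every rule whose limit is exceeded."""
    alerts = []
    for rule in RULES:
        if rule.metric not in currents:
            continue  # metric not reported in this cycle
        moved = movement(rule, baselines[rule.metric], currents[rule.metric])
        if moved > rule.limit:
            alerts.append((rule.metric, rule.level, rule.action))
    return alerts


baselines = {"model_accuracy": 94.2, "approval_rate": 51.0, "fairness_disparity": 3.2}
currents = {"model_accuracy": 88.0, "approval_rate": 55.0, "fairness_disparity": 3.8}
alerts = evaluate(baselines, currents)
print(alerts)
```

Keeping the rules in one data structure means the table in the gate pack and the live alert configuration cannot silently drift apart.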

Section 5: Governance & Risk Assessment

What regulators need: Proof executives understand the model and approved it.

Proof points:

Risk assessment (what are the risks?)

Business case (why are we deploying this?)

Risk mitigation (how are we managing these risks?)

Governance approvals (who signed off?)

Template format:

RISK ASSESSMENT & GOVERNANCE

=============================

Executive Summary:

Model: Credit Risk Scorer v2.4

Deployment scope: Consumer lending, up to $500K per applicant

Volume: ~5,000 decisions per month

Risk level: MEDIUM (regulated financial decision, fairness-sensitive)

Key Risks:

Risk 1: Accuracy degrades in recession

- Mitigation: Stress testing completed ✓, monitoring in place ✓

- Residual risk: LOW

Risk 2: Model is less accurate for minorities (fairness risk)

- Mitigation: Fairness audit completed ✓, disparity <1% ✓

- Residual risk: LOW

Risk 3: Model gets hacked or adversarially manipulated

- Mitigation: Input validation, rate limiting, evidence logging

- Residual risk: LOW

Risk 4: Data quality degrades after deployment

- Mitigation: Automated data validation, alerts configured

- Residual risk: MEDIUM (requires monitoring)

Risk Appetite Decision:

Risk committee approved this model despite residual risks.

Residual risk is acceptable for expected benefit of $1.2M/year.

Governance Approvals:

✓ Development sign-off: Alice Chen (Feb 22, 2025)

✓ Risk Manager sign-off: Bob Singh (Feb 20, 2025)

✓ Governance sign-off: Carol Martinez (Feb 22, 2025)

[ ] Compliance review: Pending

[ ] Audit review: Pending

[ ] Risk Committee: Pending (scheduled for Feb 28)

Release gates:

Before deployment: All approvals required

Deployment decision: Risk Committee sign-off only

Post-deployment: Monthly risk reporting

This is partially auto-generated (approvals from workflow system) and partially manual (risk committee sign-off).
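The "all approvals required" release gate can be enforced in code rather than by reading the document. A minimal sketch, assuming the workflow system can export approvals as a mapping from role to sign-off date (the role names and the export format are assumptions for illustration):

```python
# Roles taken from the Governance Approvals list in the template.
REQUIRED_APPROVALS = [
    "Development",
    "Risk Manager",
    "Governance",
    "Compliance",
    "Audit",
    "Risk Committee",
]


def release_gate(approvals):
    """Check a role -> sign-off date mapping; None or a missing key means pending."""
    missing = [role for role in REQUIRED_APPROVALS if not approvals.get(role)]
    return {"can_deploy": not missing, "missing": missing}


# State matching the example template: three sign-offs done, three pending.
approvals = {
    "Development": "2025-02-22",
    "Risk Manager": "2025-02-20",
    "Governance": "2025-02-22",
    "Compliance": None,
    "Audit": None,
    "Risk Committee": None,
}

status = release_gate(approvals)
print(status)
```

A deployment pipeline could call a check like this and refuse to promote the model while `missing` is non-empty.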

Building the Gate Pack Generator (Implementation)

The gate pack generator is a system that:

Queries your model registry (MLflow, Kubeflow, or custom DB)

Queries your validation results (test metrics, fairness audit, backtesting)

Queries your monitoring (current model health)

Merges into templates

Generates PDF/Word for approval

Simple Implementation (Python + Templating)

```python
import subprocess
from datetime import datetime
from textwrap import dedent

import requests
from jinja2 import Template


class GatePackGenerator:
    """Collects evidence from internal systems and renders a gate pack.

    The *.internal URLs are placeholders; point them at your own services.
    """

    def __init__(self, model_name, model_version):
        self.model_name = model_name
        self.model_version = model_version
        self.data = {}

    def _get_json(self, url):
        """GET a URL and return parsed JSON, failing loudly on HTTP errors."""
        response = requests.get(url, timeout=30)
        response.raise_for_status()
        return response.json()

    def fetch_model_metadata(self):
        """Query model registry for model info"""
        self.data['model'] = self._get_json(
            f"https://mlflow.internal/api/models/{self.model_name}/versions/{self.model_version}"
        )

    def fetch_validation_results(self):
        """Query validation system for test results"""
        self.data['validation'] = self._get_json(
            f"https://validation.internal/results/{self.model_name}/{self.model_version}"
        )

    def fetch_fairness_audit(self):
        """Query fairness monitoring for current metrics"""
        self.data['fairness'] = self._get_json(
            f"https://fairness.internal/audit/{self.model_name}/{self.model_version}"
        )

    def fetch_monitoring_config(self):
        """Query monitoring system for alerts/thresholds"""
        self.data['monitoring'] = self._get_json(
            f"https://monitoring.internal/config/{self.model_name}"
        )

    def _render(self, template_path, **context):
        """Load one Jinja2 template file and render it with the given data."""
        with open(template_path) as f:
            return Template(f.read()).render(**context)

    def generate_gate_pack(self):
        """Assemble all templates into final document"""
        generated_at = datetime.now()

        # Render each section (raises KeyError if a fetch_* step was skipped)
        sections = [
            self._render('templates/model_identity.md',
                         model=self.data['model'],
                         generated_at=generated_at.isoformat()),
            self._render('templates/validation.md',
                         validation=self.data['validation']),
            self._render('templates/fairness.md',
                         fairness=self.data['fairness']),
            self._render('templates/monitoring.md',
                         monitoring=self.data['monitoring']),
        ]

        # Combine into final document
        full_document = "\n\n---\n\n".join(sections)

        # Add cover page. The version is printed as given (e.g. 'v2.4'),
        # so no extra "v" prefix is added here.
        cover = dedent(f"""\
            # DEPLOYMENT GATE PACK

            ## Model: {self.model_name} {self.model_version}

            Generated: {generated_at.strftime('%Y-%m-%d %H:%M:%S')}

            Status: READY FOR REVIEW

            This document certifies that the above model has completed all
            validation, fairness, and governance requirements for production deployment.

            Approvers required:

            - [ ] Compliance Officer
            - [ ] Risk Committee Chair
            - [ ] Audit Lead
            """)

        return cover + "\n\n" + full_document

    def generate_pdf(self, output_path):
        """Generate final PDF for submission"""
        markdown = self.generate_gate_pack()

        # Convert markdown to PDF with pandoc, reading the markdown from stdin.
        # pandoc infers PDF output from the .pdf extension of output_path
        # and needs a PDF engine (such as a LaTeX distribution) installed.
        subprocess.run(
            ['pandoc', '-f', 'markdown', '-o', output_path],
            input=markdown.encode(),
            check=True,
        )
        return output_path


# Usage
if __name__ == "__main__":
    generator = GatePackGenerator('credit_risk_scorer', 'v2.4')
    generator.fetch_model_metadata()
    generator.fetch_validation_results()
    generator.fetch_fairness_audit()
    generator.fetch_monitoring_config()

    pdf_path = generator.generate_pdf('credit_risk_v2.4_gate_pack.pdf')
    print(f"Gate pack ready: {pdf_path}")
```

When to Regenerate Gate Packs

Gate packs aren't static. Regenerate when:

Model changes (new version deployed)

Validation results change (fairness audit updated)

Monitoring reveals drift (new issues discovered)

Regulations change (new compliance requirements)
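The four triggers above can be checked mechanically by comparing what the last gate pack was built from with the current state of your systems. This is only a sketch: `PackState` and its fields (including `ruleset_version` as a stand-in for "regulations changed") are hypothetical names, and how you obtain each value depends on your registry and monitoring setup.

```python
from dataclasses import dataclass


@dataclass
class PackState:
    """Snapshot of the inputs a gate pack was (or would be) built from."""
    model_version: str
    validation_run_id: str
    open_drift_alerts: int
    ruleset_version: str  # hypothetical tag for the compliance requirements in force


def regeneration_reasons(pack, current):
    """Return the list of triggers that fired; an empty list means the pack is current."""
    reasons = []
    if current.model_version != pack.model_version:
        reasons.append("model changed")
    if current.validation_run_id != pack.validation_run_id:
        reasons.append("validation results changed")
    if current.open_drift_alerts > pack.open_drift_alerts:
        reasons.append("monitoring found new drift")
    if current.ruleset_version != pack.ruleset_version:
        reasons.append("regulations changed")
    return reasons


last_pack = PackState("2.4.1", "val-001", 0, "2025-01")
now = PackState("2.4.2", "val-001", 1, "2025-01")
reasons = regeneration_reasons(last_pack, now)
print(reasons)
```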

Example:

Regular schedule:

Initial gate pack: Before deployment

Monthly review: Update fairness metrics, confirm monitoring

Quarterly audit: Full regeneration with new validation

On-demand:

Model update: Regenerate immediately

Fairness breach: Regenerate with root cause analysis

Regulator request: Regenerate within 24 hours

Looking Ahead (2026-2030)

2026-2027: Gate pack generation becomes standard

Regulators expect consistent documentation format

Automation required (manual gate packs disappear)

Integration with model registries becomes default

2027-2028: Gate packs become dynamic

Not static PDFs but living documents

Updated continuously as model metrics change

Regulators can query gate pack data via API

2028-2030: Gate pack standards emerge

Industry convergence on gate pack structure

Regulatory guidelines specify required sections

Automated compliance checking against standards

HIVE Summary

Key takeaways:

Gate packs are the evidence portfolio proving a model is safe to deploy. They're 40+ documents covering validation, fairness, monitoring, and governance.

Manual gate pack creation takes weeks and is error-prone. Automated gate pack generators query your systems, populate templates, and produce consistent documentation in minutes.

Gate pack sections are auto-generated from existing systems (model registry for metadata, validation pipeline for test results, fairness monitoring for bias metrics, monitoring system for alerts).

Gate packs must be regenerated when models change, validation updates, or new risks emerge. They're living documents, not static deliverables.

Gate pack generation is the bridge between "model ready" and "approved for deployment." It enforces governance and proof before production.

Start here:

If you don't have gate packs: Build a template. Start with the five sections: Identity, Validation, Fairness, Monitoring, Governance. Manually fill once, then automate.

If you have gate packs but they're manual: Identify which sections can be auto-generated (most validation + fairness sections). Build the generator for those. Save weeks of manual work.

If you have multiple models: Gate pack generator scales. Once built, generates packs for all models in minutes. Store all packs in audit trail for compliance.
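For the multi-model case, one way to keep generated packs auditable is to record a content hash for each pack in an append-only log. The sketch below assumes a JSON-lines file as the trail and uses made-up model names and pack contents. An append-only file is not tamper-proof by itself, so a real audit trail would normally sit in write-once storage.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(model_name, model_version, pack_bytes, generated_at=None):
    """Build one audit-trail entry for a generated gate pack."""
    generated_at = generated_at or datetime.now(timezone.utc)
    return {
        "model": model_name,
        "version": model_version,
        "generated_at": generated_at.isoformat(),
        "sha256": hashlib.sha256(pack_bytes).hexdigest(),
        "size_bytes": len(pack_bytes),
    }


def append_to_trail(trail_path, record):
    """Append the record as one JSON line; existing lines are never rewritten."""
    with open(trail_path, "a", encoding="utf-8") as trail:
        trail.write(json.dumps(record, sort_keys=True) + "\n")


# Hypothetical pack contents for two models.
packs = {
    ("credit_risk_scorer", "v2.4"): b"# DEPLOYMENT GATE PACK ...",
    ("fraud_detector", "v1.0"): b"# DEPLOYMENT GATE PACK ...",
}

records = [
    audit_record(name, version, content)
    for (name, version), content in packs.items()
]
for record in records:
    print(record["model"], record["version"], record["sha256"][:12])
```

Calling `append_to_trail("gate_pack_trail.jsonl", record)` for each record would persist the entries; the file name is illustrative.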

Looking ahead (2026-2030):

Gate pack generation becomes regulatory expectation. Auditors will ask "how are you generating these?" Automation = compliance proof.

Dynamic gate packs emerge. Not PDFs but living documents that update as model metrics change.

Industry standards converge. Regulatory guidance clarifies required gate pack sections and formats.

Open questions:

What's the minimal gate pack (can you deploy with less documentation)? (No—regulators want comprehensive evidence. Minimal would be 20+ documents.)

How often should you regenerate? (Before deployment + monthly + on any significant change. Quarterly minimum for active models.)

Who owns the gate pack approval? (Risk Committee owns the decision. Compliance/Audit verify completeness. Governance Chair signs off.)

Jargon Buster

Gate Pack: Complete evidence portfolio proving a model is safe and fair to deploy. Why it matters in BFSI: Regulators require this before you deploy. Models can't ship without it. It's your approval evidence.

Model Card: Documentation of what a model does, how it works, its limitations. Why it matters in BFSI: Required by regulators and auditors. Explains the model to non-technical stakeholders.

Validation Report: Results of testing: accuracy, fairness, backtesting, stress testing. Why it matters in BFSI: Proof the model was tested before deployment. Regulatory requirement.

Fairness Audit: Analysis of whether the model discriminates. Approval rates by demographic, root cause analysis of disparities. Why it matters in BFSI: Fed, EBA, FCA all require this. Proof you didn't deploy discrimination.

Risk Assessment: Identification of risks (accuracy degradation, fairness violations, security issues) and mitigation plans. Why it matters in BFSI: Risk committee uses this to decide if risk is acceptable.

Governance Approvals: Sign-offs from Risk, Compliance, Audit proving they reviewed the model. Why it matters in BFSI: Audit trail showing who knew what and when. Regulatory requirement.

Gate: Decision point requiring documentation before proceeding. Why it matters in BFSI: Gates prevent untested models from deploying. The gate pack is the evidence that the gate was cleared.

Incident Response Runbook: Step-by-step procedure for responding when model fails. Who calls who, what they do, timeline. Why it matters in BFSI: Required to deploy. Proves you're prepared for failures.

Fun Facts

On Manual Gate Packs: A bank had 12 ML models in production. Each gate pack was maintained in Excel + Word docs. When regulators asked "show me fairness audit for all models," compliance spent 3 weeks assembling data (asking each team, copying into Word, formatting). By the time it was ready, two models had updated validation results. Lesson: Automation is not just convenience, it's compliance infrastructure.

On Gate Pack Surprises: One bank automated gate pack generation beautifully. Then they realized their data had a 2-week lag (fairness metrics weren't real-time). Gate packs looked current but were 2 weeks old. Regulators noticed. Now they update data hourly. Lesson: Automated systems are only as good as their data sources.

For Further Reading

Model Cards for Model Reporting (Google Research, 2019) | https://arxiv.org/abs/1810.03993 | Framework for documenting models. Foundation for gate pack model card section.

Model Risk Management Governance (Federal Reserve, 2025) | https://www.federalreserve.gov/publications/sr2501.pdf | Fed expectations for model documentation and governance gates.

Documenting Machine Learning Deployments (MLOps Community, 2024) | https://mlops.community/guide-to-ml-documentation | Practical guide to documenting models for production and compliance.

Model Governance Frameworks (EBA, 2026) | https://www.eba.europa.eu/regulation-and-policy/artificial-intelligence | European framework for AI governance and documentation requirements.

Building Audit-Ready ML Systems (DataRobot, 2024) | https://www.datarobot.com/blog/audit-ready-ml | Practical guide to building systems that produce audit evidence automatically.

Next up: Week 18 Sunday dives into "Fairness Metrics and Bias Ratios in Finance"—the theory behind different fairness definitions and how to choose which metrics your models should optimize for.

This is part of our ongoing work understanding AI deployment in financial systems. If you're building gate packs or automating governance documentation, share your approach—what sections did you prioritize? What was hardest to automate?