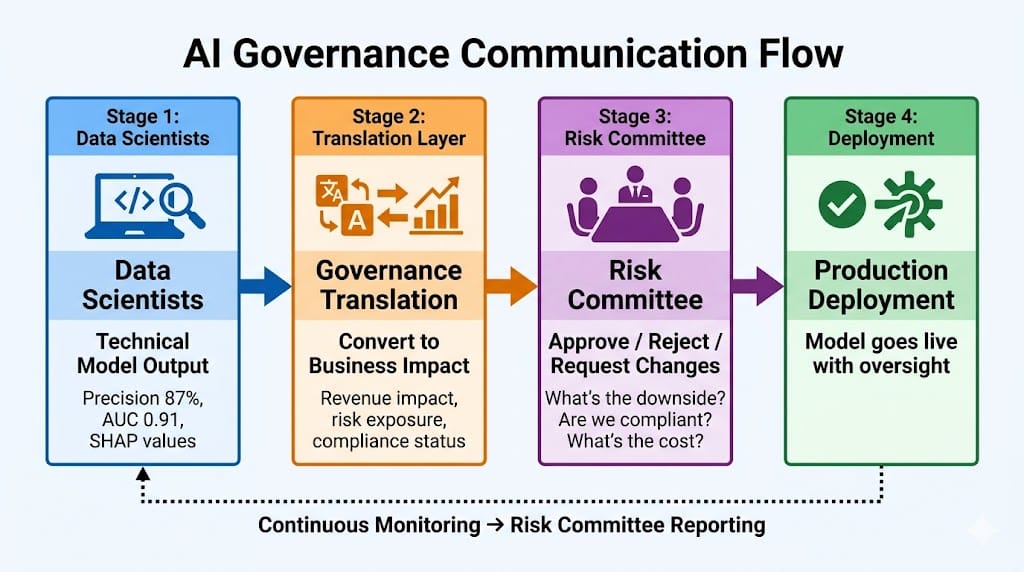

Quick Recap: Risk committees don't speak Python or understand confusion matrices. They speak business risk, regulatory exposure, and stakeholder impact. Here's how to translate AI model outputs into narratives that non-technical governance bodies can actually act on.

The Question

The Chief Risk Officer (CRO) sat across from the data science lead, staring at a dashboard filled with metrics: precision 0.87, recall 0.82, F1 score 0.84, AUC-ROC 0.91.

"So… is this model good or bad?" the CRO asked.

The data scientist blinked. "It's good. Very good, actually. The AUC is—"

"I don't know what AUC means," the CRO interrupted. "What I need to know is: If we deploy this model, what happens when it's wrong? How many customers get denied incorrectly? How much money do we lose? What does the regulator say when we explain this to them?"

The data scientist paused. The model was technically excellent. But none of the metrics on the dashboard answered the CRO's questions.

"Let me… reframe this," the data scientist said, closing the technical dashboard.

This is the reality of AI governance in 2026: Technical excellence doesn't matter if you can't explain it to the people who approve deployments. Risk committees don't care about AUC scores. They care about business impact, regulatory risk, and what happens when things go wrong.

Why Risk Committees Need Different Narratives

What Risk Committees Actually Do

Role: Oversee enterprise-wide risks and approve high-stakes decisions (new products, major technology deployments, regulatory compliance strategies).

Composition: Typically senior executives, including:

Chief Risk Officer (CRO)

Chief Compliance Officer (CCO)

Chief Financial Officer (CFO)

Chief Legal Officer (CLO)

Chief Technology Officer (CTO)

Head of Internal Audit

Their mandate: Ensure the bank doesn't take on unacceptable risks. If an AI model creates regulatory exposure, reputational damage, or financial loss, the risk committee is accountable.

What They Care About (Not What Data Scientists Think They Care About)

Risk committees care about:

Downside scenarios: What happens when the model fails? (not "what's the average performance?")

Regulatory exposure: Will regulators approve this? What documentation do we need?

Stakeholder impact: Who gets hurt if this goes wrong? (customers, shareholders, employees)

Remediation costs: If we have to fix this later, what does it cost in time, money, reputation?

Alternatives: What happens if we don't deploy this model? What's the counterfactual?

Risk committees do NOT care about:

Precision/recall/F1 scores (unless translated to business impact)

Algorithm names (BERT, XGBoost, Random Forest mean nothing to them)

Technical implementation details (how many layers, what learning rate)

Why this matters in BFSI (banking, financial services, and insurance): Every AI deployment requires risk committee approval. If you can't translate technical metrics into their language, your model doesn't get deployed, no matter how good it is.

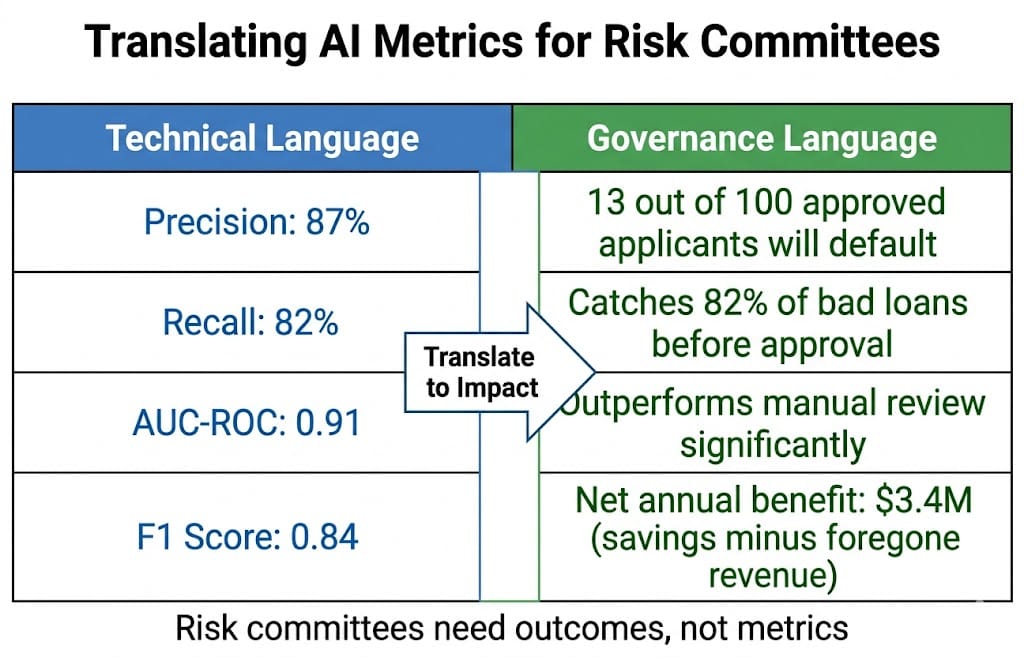

The Translation Framework: Technical → Governance

Step 1: Replace Metrics with Outcomes

Bad (Technical Language): "The model achieves 87% precision and 82% recall with an AUC-ROC of 0.91."

Good (Governance Language): "Out of every 100 applications the model flags as likely defaults, 87 would actually have defaulted and 13 would have repaid (precision 87%). Out of every 100 applicants who would have defaulted, the model catches 82 (recall 82%). The model performs significantly better than our current manual review process."

Even better (Business Impact): "Deploying this model will reduce credit losses by $4.2M annually by catching 82% of defaulters before approval. However, about 13 of every 100 applicants it declines would have repaid, costing approximately $800K in forgone interest revenue. Net benefit: $3.4M/year."

Why this works: Risk committees understand dollars, customer impact, and trade-offs. They don't understand AUC.
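The translation in Step 1 can be sketched in a few lines of Python. This is a minimal illustration, not a production method: it assumes the positive class is "flagged as likely default", the confusion-matrix counts are invented for the example, and the per-loan figures ($8,000 lost per default, $600 of interest per good loan) are the approximate values used elsewhere in this article. Because the counts are made up, the dollar totals will not match the $4.2M and $800K quoted above. All names (`ConfusionCounts`, `business_view`, `narrative`) are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    """Outcome counts for a default model (positive class = flagged as likely default)."""
    true_pos: int   # flagged, and would have defaulted (bad loan avoided)
    false_pos: int  # flagged, but would have repaid (good customer declined)
    false_neg: int  # not flagged, and defaulted (bad loan approved)
    true_neg: int   # not flagged, and repaid (good loan approved)


def business_view(c, loss_per_default, revenue_per_good_loan):
    """Turn confusion-matrix counts into rates and dollar figures."""
    precision = c.true_pos / (c.true_pos + c.false_pos)
    recall = c.true_pos / (c.true_pos + c.false_neg)
    losses_avoided = c.true_pos * loss_per_default
    revenue_forgone = c.false_pos * revenue_per_good_loan
    return {
        "precision": precision,
        "recall": recall,
        "losses_avoided": losses_avoided,
        "revenue_forgone": revenue_forgone,
        "net_benefit": losses_avoided - revenue_forgone,
    }


def narrative(view):
    """Phrase the same numbers the way a risk committee would want to hear them."""
    declined_in_error = round((1 - view["precision"]) * 100)
    caught = round(view["recall"] * 100)
    return (
        f"Of every 100 applicants the model declines, about {declined_in_error} "
        f"would have repaid. Of every 100 applicants who would have defaulted, "
        f"it catches {caught}. Losses avoided: ${view['losses_avoided']:,.0f}; "
        f"interest forgone: ${view['revenue_forgone']:,.0f}; "
        f"net benefit: ${view['net_benefit']:,.0f}."
    )


# Hypothetical counts for 10,000 applications (assumed, not from the article).
counts = ConfusionCounts(true_pos=820, false_pos=123, false_neg=180, true_neg=8877)
view = business_view(counts, loss_per_default=8_000, revenue_per_good_loan=600)
summary = narrative(view)
print(summary)
```

The design point is that the committee-facing sentence is generated from the same counts as the technical metrics, so the two views cannot drift apart.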

Step 2: Lead with Downside Scenarios

What risk committees ask first: "What happens when this goes wrong?"

Why: Their job is risk mitigation, not performance optimization. They assume things will fail and want to understand the blast radius.

How to present:

Bad: "The model is 91% accurate."

Good: "The model will make mistakes 9% of the time. Here's what those mistakes look like:

Type 1 error (false positive): Declining a good customer. Impact: Lost revenue (~$600 per declined loan). Mitigation: Human review for borderline cases.

Type 2 error (false negative): Approving a bad customer. Impact: Default loss (~$8,000 per bad loan). Mitigation: Secondary fraud check before final approval.

Worst-case scenario: If the model completely fails (e.g., software bug), we revert to manual underwriting within 4 hours. Backlog impact: 2-day delay for new applications."

Why this works: Risk committees want to know you've thought through failure modes and have mitigation plans. Presenting worst-case scenarios builds trust.
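A rough way to size the blast radius of each error type is to multiply expected error counts by the per-error cost. The sketch below assumes the 9% overall error rate splits into 6% false positives and 3% false negatives; that split is an assumption for illustration only, as are the batch size and the function name `error_cost_breakdown`. The per-error costs are the approximate figures quoted above.

```python
def error_cost_breakdown(n_decisions, fp_rate, fn_rate, cost_per_fp, cost_per_fn):
    """Estimate how many errors of each type occur and what they cost.

    Rates are fractions of all decisions, not of a single class.
    """
    fp_count = round(n_decisions * fp_rate)
    fn_count = round(n_decisions * fn_rate)
    rows = [
        {"error": "good customer declined", "count": fp_count, "cost": fp_count * cost_per_fp},
        {"error": "bad loan approved", "count": fn_count, "cost": fn_count * cost_per_fn},
    ]
    # Most expensive failure mode first: that is what the committee asks about.
    rows.sort(key=lambda r: r["cost"], reverse=True)
    return rows


# Assumed inputs: 10,000 decisions, 6% false positives, 3% false negatives.
rows = error_cost_breakdown(10_000, fp_rate=0.06, fn_rate=0.03,
                            cost_per_fp=600, cost_per_fn=8_000)
total_cost = sum(r["cost"] for r in rows)
for r in rows:
    share = r["cost"] / total_cost
    print(f"{r['error']}: {r['count']} cases, ${r['cost']:,} ({share:.0%} of error cost)")
```

Under these assumed inputs the rarer error (approving a bad loan) accounts for most of the cost, which is the kind of asymmetry an overall error rate hides.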

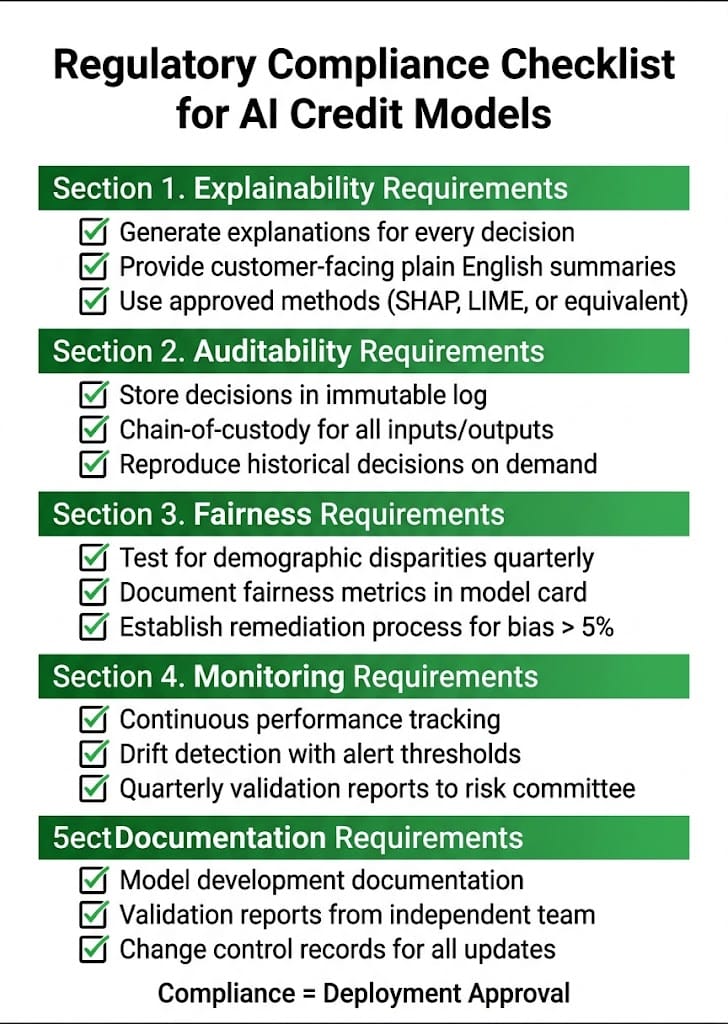

Step 3: Frame Regulatory Exposure Clearly

What risk committees need to know:

Does this model comply with current regulations?

What documentation do regulators require?

If regulations change, how easily can we adapt?

How to present:

Bad: "The model uses SHAP for explainability."

Good: "Regulatory requirement (Fed SR 11-7, EBA Guidelines 2026): Credit models must be explainable to customers and auditable by regulators.

Our approach:

Generate SHAP explanations for every decision (satisfies 'explainability' requirement)

Store explanations with predictions in immutable log (satisfies 'auditability' requirement)

Provide customer-facing summaries in plain English (satisfies 'transparency' requirement)

Regulatory risk: Low. We meet current Fed and EBA standards. If regulations tighten (e.g., require real-time explanations instead of post-hoc), we can adapt by switching from batch SHAP to faster approximation methods (tested, ready to deploy)."

Why this works: Risk committees don't want surprises. Showing you've mapped regulatory requirements and have contingency plans demonstrates governance maturity.
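The "store explanations with predictions in an immutable log" step can be illustrated with a tamper-evident, append-only log. This is a minimal sketch under several assumptions: the log lives in memory, each entry is chained to the previous one with a SHA-256 hash, and the attribution values are hand-written placeholders standing in for real SHAP output. The class name `ExplanationLog` and the record fields are hypothetical. A production system would typically rely on write-once storage or a database with audit controls; hash chaining only makes later edits detectable.

```python
import hashlib
import json


class ExplanationLog:
    """Append-only log in which every entry stores the hash of the previous entry."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(record):
        # Hash everything except the stored hash itself, with stable key order.
        payload = {k: v for k, v in record.items() if k != "hash"}
        encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def append(self, decision_id, prediction, attributions):
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        record = {
            "decision_id": decision_id,
            "prediction": prediction,
            "attributions": attributions,
            "prev_hash": prev_hash,
        }
        record["hash"] = self._digest(record)
        self._entries.append(record)
        return record["hash"]

    def verify(self):
        """Return True only if no entry has been altered, removed, or reordered."""
        prev_hash = self.GENESIS
        for entry in self._entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True


log = ExplanationLog()
# Attribution values below are illustrative placeholders, not real SHAP output.
log.append("APP-001", "decline", {"debt_to_income": 0.31, "missed_payments": 0.22})
log.append("APP-002", "approve", {"debt_to_income": -0.18, "tenure_years": -0.09})
print(log.verify())
```

Editing any stored field after the fact changes that entry's digest, so `verify()` fails and the alteration is visible to an auditor.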

Step 4: Quantify Stakeholder Impact

Risk committees ask: "Who is affected, and how?"

Three stakeholder groups:

Customers: How does the model change their experience?

Employees: How does it change workflows?

Shareholders: How does it affect revenue, costs, reputation?

How to present:

Customer Impact:

"Loan decisions in 30 seconds (vs. 3 days manually)"

"About 13% of the applicants the model declines would have repaid (precision trade-off)"

"Customers can request human review for any AI decision (regulatory requirement, adds 2-day delay)"

Employee Impact:

"Underwriters shift from manual review (80% of time) to exception handling (20% of time)"

"Training required: 2-week program on AI decision oversight"

"Headcount impact: None (redeploy underwriters to complex cases, no layoffs)"

Shareholder Impact:

"Revenue: $3.4M net annual benefit (credit loss reduction minus forgone interest)"

"Reputation risk: Medium (if model shows bias, media exposure likely)"

"Mitigation: Quarterly fairness audits, proactive disclosure to regulators"

Why this works: Risk committees represent all stakeholders. Showing you've considered impact on each group demonstrates strategic thinking.

Step 5: Present Alternatives (Not Just Your Preferred Option)

Risk committees don't want to rubber-stamp your decision. They want to evaluate options and choose the best one.

How to present:

Option 1: Deploy AI Model

Benefit: $3.4M annual savings, faster decisions

Risk: 9% error rate, regulatory scrutiny, bias concerns

Timeline: 3 months to production

Option 2: Hybrid (AI + Human Review)

Benefit: $2.1M annual savings (lower due to human review costs), lower error rate (6%)

Risk: Slower decisions (2-day average), higher operational cost

Timeline: 4 months to production

Option 3: Status Quo (Manual Underwriting)

Benefit: Zero implementation risk, known process

Risk: Forgone $3.4M savings, slower decisions, scalability constraints

Timeline: N/A (current state)

Recommendation: Deploy Option 1 (AI Model) with quarterly fairness audits and human escalation path for borderline cases. Balances speed, cost savings, and risk mitigation.

Why this works: Presenting alternatives shows you're not married to one solution. Risk committees appreciate being given a choice, not a fait accompli.
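One way to keep the options comparable is to hold them in a single structure and print them side by side. The sketch below uses the figures from the three options above; the status quo is given zero savings as the baseline and no error rate, because none is stated. The `Option` class and `comparison_table` function are hypothetical names for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Option:
    name: str
    annual_savings_musd: float        # net annual savings in $M versus today
    error_rate_pct: Optional[float]   # None where no figure is stated
    timeline: str


options = [
    Option("Status quo (manual)", 0.0, None, "current state"),
    Option("Hybrid (AI + human review)", 2.1, 6.0, "4 months"),
    Option("Deploy AI model", 3.4, 9.0, "3 months"),
]


def comparison_table(opts):
    """Render options as aligned text rows, highest savings first."""
    lines = [f"{'Option':<28}{'Savings':>9}{'Errors':>8}  Timeline"]
    for o in sorted(opts, key=lambda o: o.annual_savings_musd, reverse=True):
        savings = f"${o.annual_savings_musd:.1f}M"
        errors = "n/a" if o.error_rate_pct is None else f"{o.error_rate_pct:.0f}%"
        lines.append(f"{o.name:<28}{savings:>9}{errors:>8}  {o.timeline}")
    return "\n".join(lines)


table = comparison_table(options)
print(table)
```

Sorting by savings is only one possible ordering; a committee focused on risk might prefer to sort by error rate instead.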

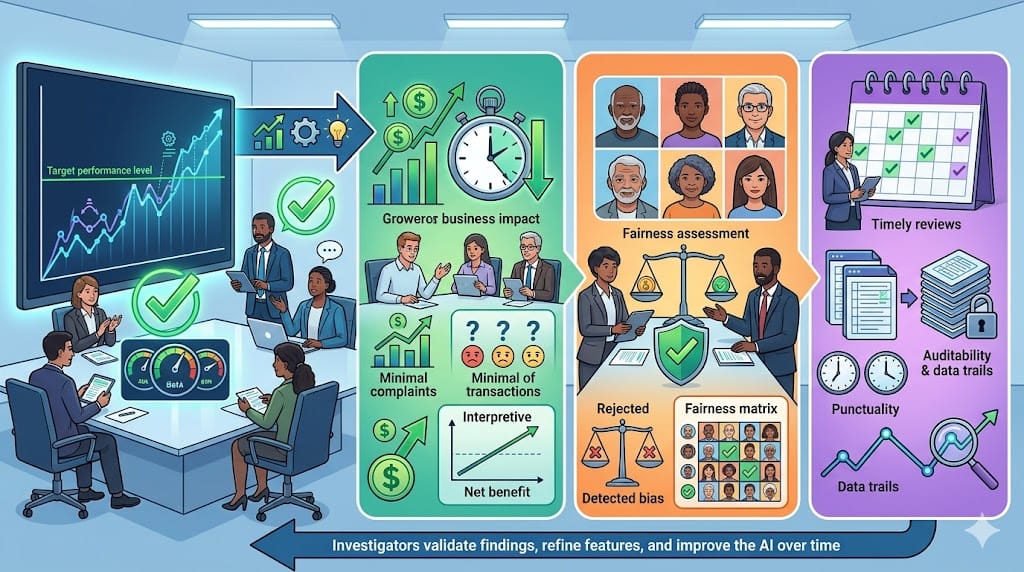

How Risk Committees Actually Interpret Dashboards

What's on a Typical AI Dashboard

Standard metrics (what data scientists build):

Model accuracy, precision, recall

Confusion matrix

Feature importance charts

Prediction distribution histograms

The problem: Risk committees look at these dashboards and see… nothing useful.

What Risk Committees Need Instead

Redesign the dashboard to answer their questions:

Question 1: "Is the model performing as expected?"

Show: Actual vs. expected performance over time (line chart)

Not: Confusion matrix (they don't know what True Positive means)

Question 2: "Are we seeing any warning signs?"

Show: Alerts triggered (e.g., "Prediction accuracy dropped 5% this month—investigating")

Not: Raw drift statistics (KL divergence = meaningless to them)

Question 3: "What's the business impact?"

Show: Revenue impact, cost savings, customer complaints related to AI decisions

Not: Feature importance (doesn't tell them about dollar impact)

Question 4: "Are we compliant?"

Show: Fairness audit results (e.g., "Gender disparity: 2.1% [within 5% tolerance]")

Not: Statistical p-values or bias ratios
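The "show alerts, not raw statistics" idea can be sketched as two small functions that turn monitoring numbers into the plain-language lines quoted above. The thresholds (a 5-point accuracy drop, a 5-point fairness tolerance), the function names, and the sample approval rates are assumptions for illustration. Gaps are measured in percentage points, even though the output keeps the "%" wording used in the dashboard example.

```python
def accuracy_alert(previous_pct, current_pct, threshold_points=5.0):
    """Return a plain-language alert if accuracy fell by at least the threshold, else None."""
    drop = previous_pct - current_pct
    if drop >= threshold_points:
        return (
            f"Prediction accuracy dropped {drop:g} points this month "
            f"({previous_pct:g}% to {current_pct:g}%): investigating"
        )
    return None


def fairness_status(label, approval_rates_pct, tolerance_points=5.0):
    """Report the largest approval-rate gap between groups against a tolerance."""
    gap = max(approval_rates_pct.values()) - min(approval_rates_pct.values())
    verdict = "within" if gap <= tolerance_points else "exceeds"
    return f"{label} disparity: {gap:.1f}% [{verdict} {tolerance_points:g}% tolerance]"


# Hypothetical monitoring inputs.
alert = accuracy_alert(previous_pct=91.0, current_pct=86.0)
quiet = accuracy_alert(previous_pct=91.0, current_pct=90.0)
fairness = fairness_status("Gender", {"women": 61.4, "men": 63.5})

print(alert)
print(fairness)
```

Returning `None` when nothing crosses a threshold keeps the governance view quiet by default, so any line that does appear is worth the committee's attention.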

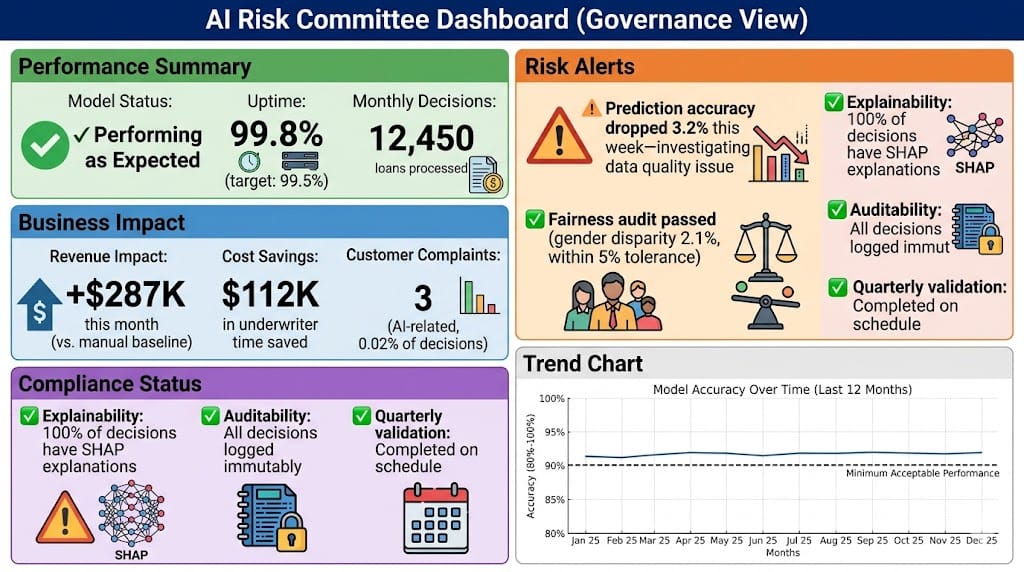

What Good Looks Like: Executive Summary Format

Instead of a 30-slide technical deck, provide a 1-page executive summary:

Model Name: Credit Risk Scoring Model v2.3

Business Objective: Reduce credit losses while maintaining loan approval speed

Performance Summary:

Deployed: June 2025

Decisions: 47,200 loans processed (6 months)

Accuracy: 91% (target: 90%)

Business impact: $2.1M in credit loss reduction (annualized)

Risk Status:

Regulatory compliance: ✓ Meets Fed, EBA, FCA requirements

Fairness: ✓ No demographic disparities > 5%

Customer complaints: 14 total (0.03%), all resolved via human review

Open Issues:

Prediction accuracy declined 3% in November due to data quality issue (resolved December 1)

Quarterly fairness audit scheduled for January 2026

Recommendation: Continue production deployment. No changes required.

Why this works: One page. Readable in 2 minutes. Answers all the questions risk committees care about.
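A template like this is easy to generate from structured data, which also keeps derived figures such as the complaint rate consistent. The following is a minimal sketch using the example values above; the `ModelSummary` class and its field names are hypothetical, and a real template would carry more fields (regulatory and fairness status, for instance).

```python
from dataclasses import dataclass, field


@dataclass
class ModelSummary:
    name: str
    objective: str
    deployed: str
    decisions: int
    accuracy_pct: float
    accuracy_target_pct: float
    impact: str
    complaints: int
    open_issues: list = field(default_factory=list)
    recommendation: str = ""

    def render(self):
        """Produce the one-page text summary, computing derived figures on the fly."""
        complaint_rate = self.complaints / self.decisions * 100
        status = "on target" if self.accuracy_pct >= self.accuracy_target_pct else "BELOW target"
        lines = [
            f"Model Name: {self.name}",
            f"Business Objective: {self.objective}",
            f"Deployed: {self.deployed}",
            f"Decisions: {self.decisions:,} loans processed",
            f"Accuracy: {self.accuracy_pct:g}% (target: {self.accuracy_target_pct:g}%, {status})",
            f"Business impact: {self.impact}",
            f"Customer complaints: {self.complaints} total ({complaint_rate:.2f}%)",
            "Open Issues:",
        ]
        lines += [f"  - {issue}" for issue in self.open_issues] or ["  - None"]
        lines.append(f"Recommendation: {self.recommendation}")
        return "\n".join(lines)


report = ModelSummary(
    name="Credit Risk Scoring Model v2.3",
    objective="Reduce credit losses while maintaining loan approval speed",
    deployed="June 2025",
    decisions=47_200,
    accuracy_pct=91,
    accuracy_target_pct=90,
    impact="$2.1M in credit loss reduction (annualized)",
    complaints=14,
    open_issues=["Quarterly fairness audit scheduled for January 2026"],
    recommendation="Continue production deployment. No changes required.",
).render()
print(report)
```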

How It Affects All Stakeholders

For Data Scientists

Before: Built technically excellent models that never got deployed because risk committees didn't understand them.

With governance translation: Learn to present models in business terms—dollars, risks, compliance—not just technical metrics.

The skill shift: Data scientists need to become "bilingual"—fluent in both technical ML and business risk language.

For Risk Committees

Before: Struggled to evaluate AI models because dashboards were incomprehensible.

With governance translation: Can actually assess AI risks, ask informed questions, and make deployment decisions confidently.

The practical impact: Risk committees approve more AI projects because they understand what they're approving.

For Compliance Officers

Before: Had to translate between data scientists and risk committees manually—acting as interpreters.

With governance translation: Data scientists present in governance-friendly terms directly, reducing compliance's translation burden.

The time savings: Compliance spends less time explaining models and more time on actual compliance work.

For Regulators

What they want: Evidence that the bank understands AI risks and has oversight processes.

Why governance translation matters: When regulators audit, they want to see that senior leadership (risk committees) understands and approves AI deployments. Technical documentation isn't enough—they want proof of informed governance.

What regulators look for (Fed, EBA, FCA 2025-2026):

Minutes from risk committee meetings showing AI model reviews

Evidence that non-technical executives asked informed questions

Documentation that risks were identified and mitigation plans approved

The regulatory value: Governance translation creates the audit trail regulators expect.

Regulatory & Practical Context (2025-2026 Baseline)

What Regulators Expect from Governance

Federal Reserve (SR 11-7, updated 2025):

AI models must have board-level oversight (risk committees count as board oversight)

Model risk management requires independent validation (not just data science self-assessment)

Quarterly reporting to senior management on model performance and risks

EBA (AI Act Implementation Guidelines, 2026):

High-risk AI systems (credit scoring, fraud detection) require documented governance approval

Risk committees must demonstrate understanding of AI risks (not just rubber-stamp approvals)

Annual audits must include review of governance meeting minutes

FCA (UK, AI Governance Framework 2025):

Senior managers accountable for AI systems under the Senior Managers and Certification Regime (SM&CR)

Risk committees must approve AI deployments before production

Documentation must show informed decision-making (not just technical sign-off)

The regulatory shift: It's not enough to have good models. You must prove senior leadership understands what they're approving.

Production Challenges (What Banks Actually Face)

Challenge 1: Data Scientists Don't Speak Business

Most data scientists are trained in technical ML, not business communication. Asking them to present to risk committees is like asking them to present in a foreign language.

What works (2025-2026 practice):

Pair data scientists with business analysts who translate

Train data scientists in "governance storytelling" (2-day workshop on translating metrics to impact)

Use templates (executive summary format, standardized dashboard designs)

Challenge 2: Risk Committees Don't Have Time for Long Presentations

Risk committees meet monthly and review 10-15 topics per meeting. AI model reviews get 15-20 minutes maximum.

The solution: One-page executive summaries + optional technical appendix (for deep dives if requested).

What doesn't work: 30-slide technical decks. Risk committees will skip them or ask to reschedule.

Challenge 3: Metrics Change Faster Than Governance Understanding

ML evolves quickly. New metrics (e.g., calibration error, fairness ratios) emerge constantly. Risk committees can't keep up.

The approach: Stick to stable, interpretable metrics for governance reporting:

Accuracy (% correct predictions)

Error rates (false positives, false negatives)

Business impact (revenue, cost, customer complaints)

Compliance status (pass/fail on regulatory requirements)

Avoid introducing: New statistical metrics every quarter. Risk committees need consistency to track trends.

Looking Ahead (2026-2030)

Trend 1: Automated Governance Dashboards (2026-2027)

Currently, data scientists manually create governance reports.

The shift: Automated dashboards that generate risk committee summaries in real-time.

How it works: AI systems log all decisions, performance metrics, and alerts. Governance dashboard pulls data automatically and formats it for risk committees (business impact, compliance status, alerts).
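The aggregation step behind such a dashboard might look like the sketch below. It assumes a hypothetical log format in which each decision records the model's prediction, the observed outcome (or `None` when no outcome exists yet), and whether a human review took place. The field names and the `governance_summary` function are illustrative and not taken from any vendor's product.

```python
def governance_summary(decisions):
    """Aggregate logged decisions into the few numbers a committee summary needs."""
    # Accuracy can only be measured where an outcome was actually observed.
    resolved = [d for d in decisions if d["defaulted"] is not None]
    correct = sum(1 for d in resolved if d["predicted_default"] == d["defaulted"])
    return {
        "decisions": len(decisions),
        "outcomes_known": len(resolved),
        "accuracy_pct": round(100 * correct / len(resolved), 1) if resolved else None,
        "human_reviews": sum(1 for d in decisions if d["human_review"]),
    }


# Hypothetical log records. Declined applications have no observed outcome
# unless a human review overturned the decision and the loan was issued.
decision_log = [
    {"predicted_default": False, "defaulted": False, "human_review": False},
    {"predicted_default": False, "defaulted": True, "human_review": False},
    {"predicted_default": True, "defaulted": None, "human_review": False},
    {"predicted_default": True, "defaulted": False, "human_review": True},
    {"predicted_default": False, "defaulted": None, "human_review": False},
    {"predicted_default": False, "defaulted": False, "human_review": False},
]

summary = governance_summary(decision_log)
print(summary)
```

Reporting how many outcomes are known alongside accuracy matters here: declined applicants never generate a repayment record, so the accuracy figure covers only part of the decision volume.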

What's coming: Tools like DataRobot, H2O.ai, and AWS SageMaker are adding "governance view" dashboards optimized for non-technical executives.

Trend 2: AI Governance as a Service (2027-2028)

The future: Third-party services that translate AI outputs for risk committees—"governance as a service."

How it works: Banks submit model performance data → Service generates executive summaries, compliance checklists, and risk assessments → Risk committees review pre-packaged governance reports.

Why this matters: Smaller banks (without dedicated ML governance teams) can still demonstrate regulatory compliance by outsourcing governance translation.

Trend 3: Regulatory Standardization of AI Reporting (2028-2030)

Current state: Every bank reports AI risks differently to risk committees.

The shift: Regulators (Fed, EBA, FCA) standardize AI governance reporting formats.

What's expected: Industry-wide templates for:

Model risk reports to boards/risk committees

Quarterly AI performance summaries

Incident reports for AI failures

Why this matters: Standardization reduces compliance burden and makes cross-bank comparisons easier for regulators.

HIVE Summary

Key takeaways:

Risk committees don't speak technical ML—they speak business risk, regulatory exposure, and stakeholder impact. Translate metrics into outcomes they understand.

Lead with downside scenarios, not average performance. Risk committees assume things fail and want to know the blast radius.

Present alternatives, not just your preferred option. Risk committees want to evaluate choices, not rubber-stamp decisions.

Replace technical dashboards with governance dashboards showing business impact, compliance status, and alerts—not precision/recall.

Start here:

If presenting to risk committee for the first time: Use the one-page executive summary format (business objective, performance, risks, recommendation). Skip the 30-slide technical deck.

If risk committee doesn't understand your dashboards: Redesign to answer their questions: "Is it working? What's the business impact? Are we compliant? What are the warning signs?"

If you're a data scientist: Learn governance storytelling—how to translate AUC-ROC to dollars, confusion matrices to customer impact, and drift statistics to business risks.

If you're compliance/risk: Create translation templates (executive summary, governance dashboard) so data scientists don't reinvent the wheel every time.

Looking ahead (2026-2030):

Automated governance dashboards (2026-2027) will generate risk committee summaries in real-time, eliminating manual report creation.

AI governance as a service (2027-2028) will let smaller banks outsource governance translation to third parties.

Regulatory standardization (2028-2030) will create industry-wide templates for AI risk reporting, reducing compliance burden.

Open questions:

What's the right level of technical detail for risk committees? Too much overwhelms them; too little risks uninformed decisions.

How do we train risk committees to ask better AI questions? Current governance often lacks depth because executives don't know what to ask.

Can governance translation be fully automated, or will it always require human judgment to frame narratives correctly?

Jargon Buster

Risk Committee: Board-level governance body that oversees enterprise risks and approves high-stakes decisions (new products, major technology deployments). Why it matters in BFSI: AI models require risk committee approval before production—no approval, no deployment.

Downside Scenario: Analysis of what happens when a system fails (error rates, customer impact, financial loss). Why it matters in BFSI: Risk committees focus on worst-case outcomes, not average performance—show you've planned for failure.

Stakeholder Impact: How a decision affects different groups (customers, employees, shareholders). Why it matters in BFSI: Risk committees represent all stakeholders and want evidence you've considered each group's interests.

Governance Translation: Converting technical AI metrics (precision, AUC, drift) into business terms (revenue impact, compliance status, customer complaints). Why it matters in BFSI: Risk committees can't evaluate models they don't understand—translation enables informed approval.

Executive Summary: One-page document summarizing business objective, performance, risks, and recommendation. Why it matters in BFSI: Risk committees have 15-20 minutes per topic—executive summaries deliver key information quickly.

Model Card: Standardized documentation format for AI models (purpose, performance, limitations, fairness testing). Why it matters in BFSI: Regulators increasingly expect model cards as part of governance documentation.

Independent Validation: Review of an AI model by a team that is independent of its developers, whether internal or external (expected under Fed SR 11-7). Why it matters in BFSI: Risk committees won't approve models without independent validation, which demonstrates governance rigor.

Board-Level Oversight: Senior leadership (board of directors or risk committee) reviewing and approving AI deployments. Why it matters in BFSI: Regulatory requirement under Fed, EBA, FCA frameworks—demonstrates accountability.

Fun Facts

On Dashboard Redesign: A large US bank discovered their AI governance dashboard had 47 metrics—precision, recall, AUC, calibration error, fairness ratios, drift statistics, and more. The CRO admitted in a 2025 interview: "I looked at that dashboard every month and understood maybe 10% of it. I approved models based on whether the data scientist seemed confident, not because I understood the metrics." After redesigning to show business impact (revenue, cost, compliance status), risk committee approval time dropped from 3 meetings (revisions requested) to 1 meeting (approved on first review). Lesson: Simplicity beats comprehensiveness for governance.

On Governance Storytelling: A data scientist at a European bank presented a fraud detection model to the risk committee in 2024 using technical language ("95% precision, 89% recall"). The committee asked for revisions—they didn't understand the impact. The second presentation opened with: "This model will save €8M annually by catching fraud earlier, but will also freeze 200 legitimate customer transactions per month, requiring manual review within 4 hours to avoid customer complaints. Net benefit: €7.1M after factoring in customer service costs." Approved immediately. Lesson: Lead with outcomes, not metrics—risk committees speak dollars and customer impact.

For Further Reading

Federal Reserve SR 11-7: Guidance on Model Risk Management (Federal Reserve, Updated 2025) | https://www.federalreserve.gov/supervisionreg/srletters/sr1107.htm | Official Fed guidance on model governance, validation, and board-level oversight requirements.

EBA Guidelines on ICT and Security Risk Management (European Banking Authority, 2026) | https://www.eba.europa.eu/regulation-and-policy/internal-governance/guidelines-on-ict-and-security-risk-management | European framework for AI governance, including risk committee approval processes.

Model Cards for Model Reporting (Mitchell et al., Google Research, 2019) | https://research.google/pubs/pub48120/ | Standardized documentation format for AI models, increasingly expected by regulators.

AI Governance for Financial Institutions (McKinsey, 2025) | https://www.mckinsey.com/industries/financial-services/our-insights/ai-governance-financial-institutions | Practical guide on building governance frameworks that satisfy regulatory expectations.

Translating AI for the Boardroom (Harvard Business Review, 2024) | https://hbr.org/2024/11/translating-ai-for-the-boardroom | Case studies on effective AI communication to non-technical executives.

Next up: We're moving to Week 22 Sunday: Model Selection as Strategic Decision-Making—how to weigh explainability, cost, and risk when choosing between competing AI models.

This is part of our ongoing work understanding AI deployment in financial systems. If you're presenting AI models to risk committees or struggling with governance translation, share your experience.